Just when you thought it was safe to call yourself Turing-complete.

Veronica seemed to be humming along nicely with her new RAM, and I was chugging away on her next set of parts. However, I started noticing strange behaviour when running test code. Things were getting a little erratic. Simple code that was clearly correct would fail to execute properly. Then the RAM test (which runs automatically on boot up) started failing sometimes. Then, she started failing to boot up at all about half the time. Something was very wrong, but with so many parts, it’s hard to know what.

When faced with a tricky problem in a complex system, a good first step is to isolate some variables. Humans don’t actually solve hard problems. We reduce them into a hundred simple ones and solve those instead. For a problem like this, form hypotheses about what might be wrong, then start testing each hypothesis to eliminate or confirm it.

The most likely culprit for my problems was the last thing I added, namely the RAM Board. The problems were new, so it made sense to aim my blamethrower on the newest part. To check that, I wrote some test code that didn’t require RAM. It just booted up and did some stuff onscreen entirely from ROM. As long as you do everything with registers and don’t try to use the stack, you can run quite a bit of 6502 code with no RAM. That code worked perfectly, so the RAM was starting to seem like the problem.

There was one other possible culprit- my shiny new backplane. Luckily, that variable was easy to eliminate, because I kept the old one intact, which was known to be reliable. I swapped in the old backplane, but the problems persisted. Further code tests confirmed that everything was fine until I tried to use RAM (either by calling subroutines, which uses the stack, or by reading and writing global variables).

So, assuming the RAM board was the problem, I started doing some very basic debugging on it. I physically inspected the board for problems, tested for shorts, double-checked continuity of all the connections against the schematic, and so forth. Everything seemed correct. However, all the evidence pointed to this board being the problem, so I pressed on. I wondered if it was a particular range of memory that was flaky. That would suggest certain address lines were bad, or one of my two RAM chips might have issues. So, I changed my boot-up RAM test code to show me which address actually fails, instead of just showing an error message.

The result was interesting, but mostly inconclusive. It was a different address each time, and the failures were all over the range of memory. So, this is not an isolated problem, which probably means my address lines and both chips are fine (or both chips are bad?). I was at an impasse.
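For anyone who wants to play with the idea, here is a minimal sketch of a RAM test that reports the failing addresses rather than a bare pass/fail. It is written in Python against a simulated memory, not Veronica's actual 6502 boot code. The `FlakyRam` class, its `drop_rate`, and the `0x55`/`0xAA` test patterns are all assumptions made up for illustration.

```python
import random


def ram_test(read, write, start, end, patterns=(0x55, 0xAA)):
    """Write each pattern to every address, read it back, and return
    a list of (address, expected, actual) tuples for every mismatch."""
    failures = []
    for pattern in patterns:
        for addr in range(start, end):
            write(addr, pattern)
            actual = read(addr)
            if actual != pattern:
                failures.append((addr, pattern, actual))
    return failures


class FlakyRam:
    """Hypothetical stand-in for the hardware: a RAM whose writes are
    occasionally lost, as if a bus connection were intermittent."""

    def __init__(self, size, drop_rate, seed):
        self.cells = [0] * size
        self.drop_rate = drop_rate
        self.rng = random.Random(seed)  # seeded so runs are repeatable

    def write(self, addr, value):
        # A dropped write leaves the old contents in place.
        if self.rng.random() >= self.drop_rate:
            self.cells[addr] = value & 0xFF

    def read(self, addr):
        return self.cells[addr]


ram = FlakyRam(size=0x1000, drop_rate=0.01, seed=1)
failures = ram_test(ram.read, ram.write, 0, 0x1000)
for addr, expected, actual in failures[:5]:
    print(f"FAIL at ${addr:04X}: wrote ${expected:02X}, read ${actual:02X}")
```

With an intermittent fault like the simulated one, the failing addresses land all over the range and change with the seed, which is roughly the "different address each time" pattern described above. A stuck address line or a single bad chip would instead tend to cluster the failures.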

When in doubt, start rolling back to first principles. I knew this RAM design worked, because I had it running perfectly off a breadboard. The design should be sound, but the implementation seemed to be flawed. Yet, I had verified the implementation five ways from Sunday. Well, when debugging a hard problem, remember that no assumption is sacred. When the likely suspects are exhausted, start questioning things you’re sure are correct. In this case, I needed to go back and reverify my design. Maybe I was lucky before. Maybe I hadn’t built my schematic exactly, so the etched board was wrong. Who knows.

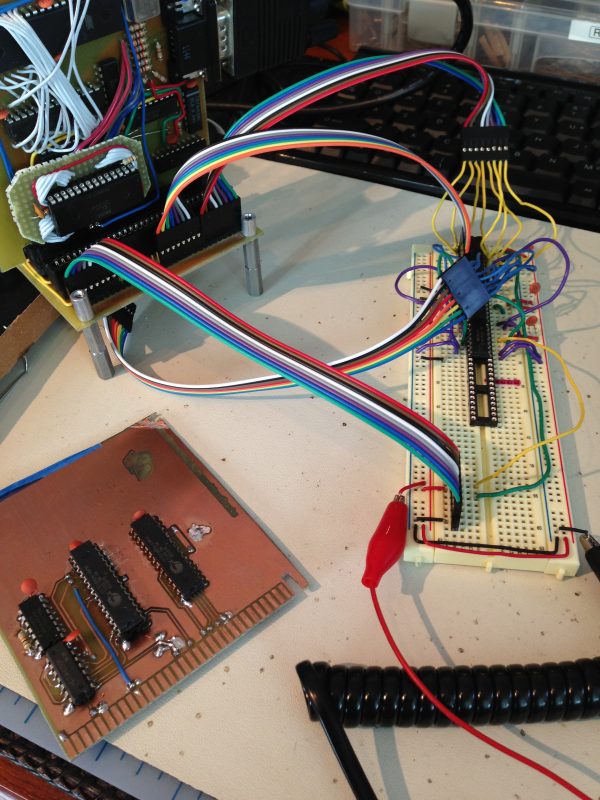

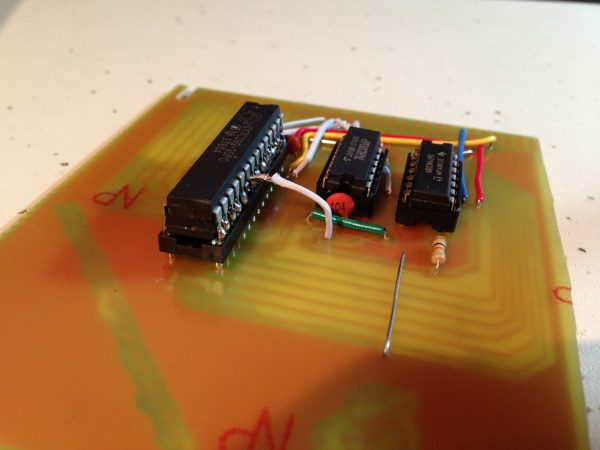

So, out came the breadboard and the Big Box O’ Chips.

Luckily, this sort of thing is actually quite easy for me to do, thanks to those debugging headers I stuck on the end of my bus on the backplane. That was a whim that has turned out to be one of the best decisions I’ve made on this project.

So, after all that, the RAM still didn’t work. However, some poking around with the logic probe made it immediately clear what was wrong. I had bad connections on several pins on the RAM and the decode logic. There was noise everywhere, and current draw was erratic on the power supply. Basically, I had bad connections all over the place. I was forced to admit that this loyal old breadboard is done. It’s had a hard life, having prototyped every single project you see on this site, but it’s utterly worn out. It was a discount cheapo board to begin with, and frankly it has given me grief with noise and a few flaky connections from day one.

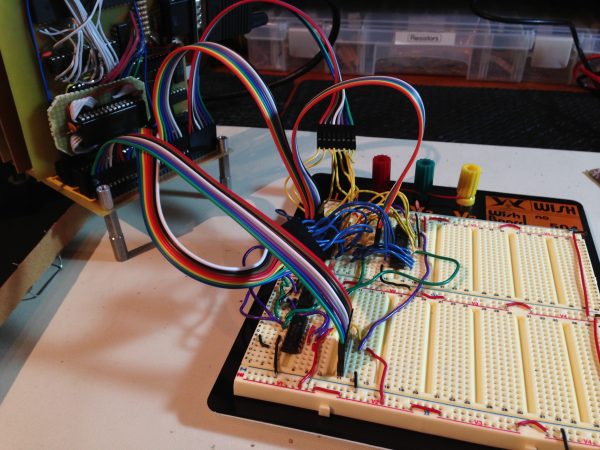

I did some research to find the highest-quality breadboard I could. The interweb’s consensus on that seems to be Wisher’s products, so I grabbed a WishBoard from Jameco. This particular model is specifically designed for IC-heavy projects, and I’m quite impressed with the quality. You can immediately feel the difference when you insert wires. Connections are confidence inspiring, instead of the “I guess that’s in” feeling I get from the cheap breadboards.

Then I rebuilt my RAM board again on my shiny new breadboard.

Survey says? This time, the RAM board worked perfectly. Huzzah! So, the design was sound. This was clearly an implementation problem.

At this point, I opted to cut my losses on the old board. It was an exotic double-sided board with handmade vias, none of which I had ever attempted before. The chips were all soldered down on both sides, so they were difficult to test (and could very well have been cooked with all that hand soldering). Any of a thousand things could be wrong, and it seemed easiest to just make a new board, using my older single-sided technique.

I also figured I would simplify the layout to make it easier to get right. I decided it’s time to ditch the little 32k SRAMs. I’ve been using them because they’re easy to get, and because I liked the old-school feel of multiple RAM chips lined up on the board. The 1980s computers had to do a lot of decoding and other work so that they could use large blocks of smaller chips (which were cheaper). I wanted to pay some homage to that. Well, screw homage. I just want it to work.

My loyal readers here had assured me that larger SRAMs in DIP packages are plentiful, and one very generous user even sent me some 64k chips, which the post office promptly lost. Our tax dollars at work. Well, someone please correct me if I’m wrong here, but I did a lot of looking, and I don’t think SRAMs are available in DIP packages larger than 32k that are usable for main system RAM on a reasonably fast CPU. The issue isn’t actually size, it’s speed. There’s plenty of RAM in the 150ns range, but that’s quite slow. You can get away with it at 1MHz, but I’d like the option to go faster, and I’d like to stock one set of chips for all purposes. The video memory, for example, is hammered at 10MHz, so even the 70ns stuff might be pushing it, by the time you get done with setup times, decoding, etc. There is some 55ns stuff out there, which I considered. There are also lots of nice options in non-DIP packages, so I considered trying my hand at SMD soldering (which really doesn’t look that difficult).
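The speed argument is easier to see with numbers, so here is a rough back-of-the-envelope sketch. It is deliberately simplified: it treats the whole clock period as the time budget, and the 30ns lumped allowance for decoding and setup is a hypothetical placeholder, not a measured figure. A real 6502 bus gives the RAM only part of each cycle, so true margins would be tighter than these.

```python
def cycle_ns(clock_mhz):
    """Length of one clock period in nanoseconds."""
    return 1000.0 / clock_mhz


def margin_ns(clock_mhz, access_ns, overhead_ns=30.0):
    """Time left in one clock period after the RAM's access time and a
    lumped allowance for decoding and setup (overhead_ns is an assumed
    placeholder). Negative means the RAM is too slow."""
    return cycle_ns(clock_mhz) - access_ns - overhead_ns


# Clock speeds and access times mentioned in the post.
for clock_mhz in (1, 10):
    for access in (150, 70, 55, 12):
        slack = margin_ns(clock_mhz, access)
        verdict = "ok" if slack > 0 else "too tight"
        print(f"{clock_mhz:>2} MHz, {access:>3} ns RAM: "
              f"{slack:7.1f} ns spare ({verdict})")
```

Under those assumptions, 150ns parts have plenty of room at 1MHz and none at 10MHz, 70ns parts come out right on the edge at 10MHz, and 12ns parts leave comfortable slack at either speed.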

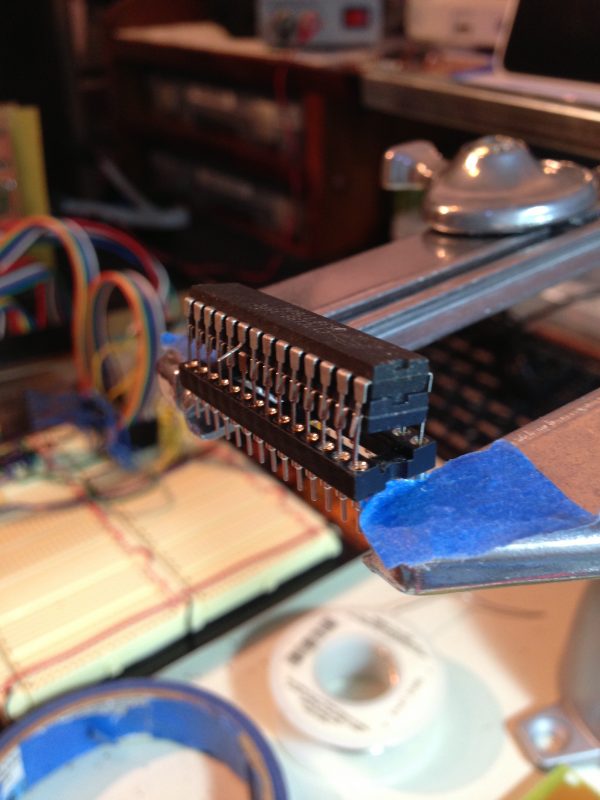

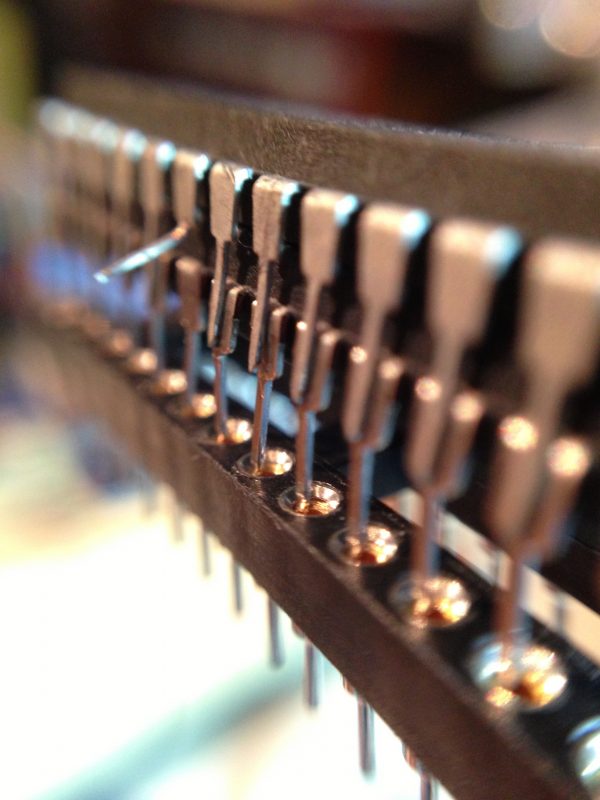

In the end, I went with the option that involved no waiting for parts to arrive- I double-stacked some 32k chips that I had on hand. I did the same thing on my video board, and it worked swell there. They’re cheap, plentiful, and rip along at 12ns. Plenty fast for any science project that might come out of Blondihacks Labs.

I thought people might be interested in the process of making a RAM chip stack, so I snapped some photos.

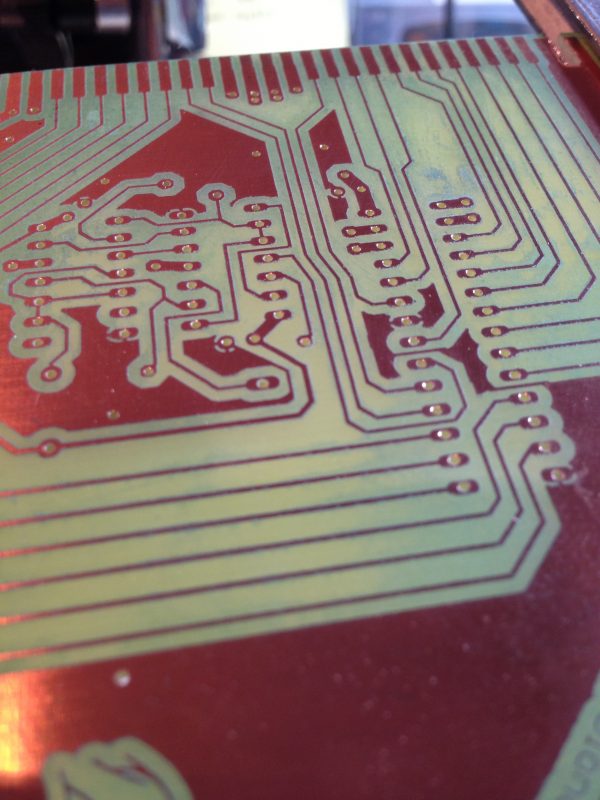

So, with one chip eliminated, my design got a lot simpler, and I could knock out a PCB in a couple of hours.

So, shiny new board all built, basic tests all passed, insert it into Veronica, fire it up, aaaaaaaaand….. FAIL. That’s right, the new board had the same problem as the original board that started all this.

What. The. Hell.

You might think I just wasted a whole lot of effort (and in some sense I did), but honestly it’s all part of the debugging process that you have to go through sometimes. By doing all this, I was very very confident that everything on that board was right. Those variables were all eliminated. That left only one culprit, no matter how improbable it seemed- the connection to the backplane.

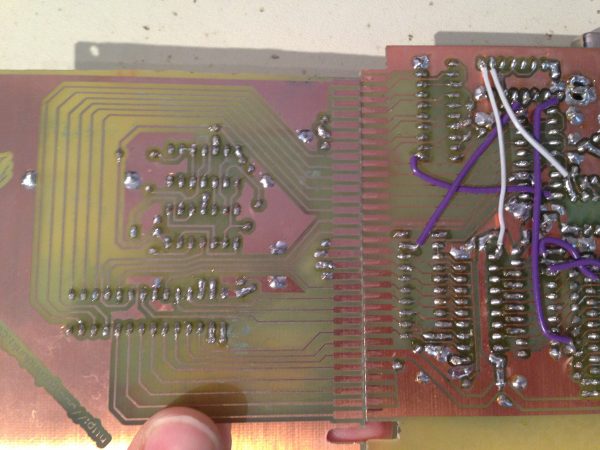

Above is a photo of my new RAM board (left), held up alongside my VGA board (right). Notice anything? There’s a very slight size error in the pins of the RAM board. This error accumulates over the length of the board. At the bottom, everything looks correct. However, by the time you get to the top, the pins are out of alignment by about 40% of their width. That’s enough that they don’t line up with the backplane connector pins- sometimes. Yes, if you’re careful, there’s enough tolerance that you can get all the pins to line up. That’s why the original RAM board was flaky- it depended on how the board had been jostled and how it happened to line up with the connector that day.

This is a textbook example of how, in engineering, no assumption is sacred. I’ve etched probably a dozen Veronica boards with that same edge connector template, and have never had the slightest problem with it. It has been bulletproof. So bulletproof that in all this debugging, it never even occurred to me to question it.

Well what happened here? To put it simply, I moved. Yes, I moved to a new residence recently, which means I now do my printing at a different FedEx Office branch. This new FedEx’s printers have a slightly different scale than the old ones. Of course I checked that- I’m not nuts. I check every PCB mask I make by lining up chip sockets on the paper, to make sure the printer hasn’t scaled it at all. Well, it turns out that the scaling was small enough that it didn’t show over the length of a chip socket, but does show over the length of the entire PCB. Hence the result you see above.
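To put rough numbers on how a scale error hides over a short distance, here is a small sketch. The inputs are assumptions chosen for illustration: an edge connector spanning 31 pin positions, a misalignment of 40% of one position at the far end, and a chip socket row spanning 13 positions (a 28-pin DIP). The sketch also treats "40% of their width" as 40% of one pin position, which is itself a guess. The 101.3mm reading in the last line is an invented example measurement.

```python
def drift_in_pitches(scale_error, span_pitches):
    """Accumulated misalignment, in pin positions, at the far end of a
    row that is span_pitches long, printed with the given fractional
    scale error."""
    return abs(scale_error) * span_pitches


def correction_factor(expected_len, measured_len):
    """Scale to apply at print time so that a feature which measured
    measured_len on paper comes out at expected_len next time."""
    return expected_len / measured_len


CONNECTOR_SPAN = 31  # assumed number of pin positions on the edge connector
SOCKET_SPAN = 13     # assumed: one row of a 28-pin DIP socket

# Back out the printer's scale error from the misalignment at the far end.
scale_error = 0.40 / CONNECTOR_SPAN

print(f"scale error:         {scale_error:.2%}")
print(f"drift over socket:   "
      f"{drift_in_pitches(scale_error, SOCKET_SPAN):.2f} positions")
print(f"drift over the edge: "
      f"{drift_in_pitches(scale_error, CONNECTOR_SPAN):.2f} positions")
print(f"print-time scale:    {correction_factor(100.0, 101.3):.4f}")
```

The same fractional error produces well under half as much drift across a socket as across the full connector, so a check against a short part can pass while the long edge still drifts out of line.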

Ultimately, I need to build this PCB a third time. However, I want to get on with other things, and if I’m careful, this board can be lined up such that it works (as long as you don’t touch it too much). For now, that’s what I’ll do, until I have the energy to rebuild it.

If you’re not living on the edge, you’re taking up too much space, am I right?

Ah, one of those “assumptions are the mother of all fuck-ups”-situations. Glad to see that not only software engineers have to deal with it, and even more glad that you found the culprit 🙂

Wow… I didn’t see that one coming. Slightly different printer scaling? Whoda thunk!

Thanks for the great post, as always. I love hearing about Veronica’s teething pains and first steps.

Indeed. It’s been my experience that laser printers all have a slight baked in scaling (at least the commercial ones, anyway). I’ve been told this is to prevent accurately photocopying currency, although that could be an urban legend.

I compensate for this by applying a scale factor to the print operation in Eagle (it has an option for that). It never occurred to me that a different FedEx Office (which uses the same printers) might have a different scaling. For my next board, I’ll need to run some tests and recalibrate my scalar. Fun times.

That’s why I like having a laser printer on my desk. I can change the scale factor in the print driver. Most applications’ print menus have an enlarge/shrink option with a variable percentage, usually adjustable in 1/10 or 1/100 of a percent. So I print out my board and measure it against the parts (I use perfboard to size up those long .1″ spacings), and I can keep reprinting the thing on my desk till it’s perfect. (Once I know the fudge factor, it should stay the same for the same S/W – printer combo.)

BTW, do you have a “free cycle” chapter near you? Someone near me wanted to get rid of an HP LJ8000 series printer. I picked up the thing so I can now print schematics on LEDGER sized (11×17) paper. It’s a huge heavy beast and a new toner pack will cost me more than the laser printer I bought brand new, but these toners will last me FOREVER (10k sheet capacity?) The one in there is still good. I’ll have to vacuum the thing out to get rid of all the loose toner that is dirtying up the print output, but other than needing a good cleaning it was worth the price!

My first laser-printer was an IBM for which I had to make a driver. The quoted 300DPI was very very close to perfect.

If you are printing to transparency sheets, I have read that the thermal expansion and subsequent cooling/shrinking can vary from vendor to vendor, sheet to sheet, and printer to printer (might also change depending on whether the fuser was just warmed up or been on a while). So I wouldn’t be too quick to blame the printer change alone. I can’t remember the original source, but he mentioned that he used an inkjet printer for transparencies just for this reason.

what a great read! I always like hearing about troubleshooting woes and solutions, but that last picture — a perfect caption to sum it all up.

Thanks, Lance! We aim to please here at Blondihacks World Headquarters (which is totally not my spare bedroom).

Great post! Made my day!

I definitely smiled and lol’d. Thanks for all the details, including the fails (I’m too shy to write about the fails, that’s why my website has so few entries hehe). Always great to see you solving problems in unconventional ways, like stacking chips.

Just wondering though. It’s probably easy to print a board that has a known expected size, and determine the right scale from the actual printout. But then the next problem is going to be how much variation you’re going to see in that scaling. For all you know, the scaling might be 1.3% (40% of one pin over the length of 31 pins) on Mondays, and some other percentage on other days, depending on the time of day, how many other documents the printer has done, evilness of the software engineers who wrote the embedded software for the printer (or their boss)…

Are you (planning on) taking a laptop into the store so you can scale it “on the fly”? i.e. “Print this picture of a ruler for me — Thanks, now print this PCB image”. Or do you make two trips?

I wonder if it wouldn’t be cheaper and easier in the long run to just pick up an old laser printer from eBay and print your own transparents. Or do you already do that?

As usual, keep up the good work!

===Jac

Good questions! The first time I did this, I made a couple of trips to establish the right scale. That was easy because I lived two blocks from the FedEx. Now I have to drive, so that’s out. Instead, I actually use the copier’s scale function. I find it works very well, actually. Each board I make from now, I’ll gradually refine the scale on Eagle’s output, but if it’s not quite right when I print it at the store, I print another one using the scaler on the copier.

To verify the size, I bring some headers and chip sockets with me, and line them up on the paper. An error of 5% can be seen that way, and anything less than that is close enough. Or it has been until now. This time, I will bring older PCB masks with me that I know are the right scale, and compare the PCB edge connector portion. If that matches, then the whole board is the right scale.

I’m avoiding buying a laser printer because I just don’t want one around. I only need one once every couple of months or so, and they take space and stuff. I just don’t like piling up crap I hardly use. 🙂

What I’ve found useful for this sort of thing is to print out a page with a square on it that’s a known size, and measure it with a high-quality steel ruler. You can get a pretty accurate scaling that way.

I’ve also noticed while doing this that printers are not necessarily quite the same scale in both directions. So a scale that gives you the right connector pattern crosswise on the paper might give you a slightly off connector when oriented longways.

You may also want to print crop marks, and measure the distance between those.

As for a laser printer, it’s true they take some space, although you may find some smaller ones. On the bright side, they don’t waste toner like inkjets waste ink, so you can use them as little as you need without incurring extra costs.

“(…) when debugging a hard problem, remember that no assumption is sacred” — wise words. When debugging software, I use a variation of that mental routine, which goes like this.

“It never worked. Don’t tell me it worked yesterday — it didn’t. It never did. I don’t care if I saw it working myself, IT. NEVER. WORKED. And now I’m going to take a look at this program to find out why it doesn’t work.”

I call it the Orwellian method, since I’m rewriting the past. More often than not, I find and fix a bug that, if you think about it too much, means the program could NEVER have worked. And lo and behold, the bugfix made the program work. Because it never worked.

I have another engineering saying I use when debugging, a quote from Sherlock Holmes:

“Once you have eliminated the impossible, whatever remains, however improbable, must be the truth.” How many times has he got that right!

BTW, I have some 32 pin 128 KByte, byte wide srams in 600 mil packages at 100ns and 70ns. They exist! Would you like one?

I think we already pointed out the existence of the AS6C1008-55PCN and other fine widely available through-hole 128K SRAM chips to her before 🙂

===Jac

Yes, and I very nearly bought some this time, as mentioned in the article. But, since I had the speedy 12ns ones on hand, I opted to use these.

100ns is not fast enough for a 10MHz 6502, which is where I’d like to get to someday. It’s also not fast enough for the 20MHz VGA board. 70 or 55 probably would be, but I’d rather stock something fast enough that I never have to worry about timing issues with the RAM.

55ns should be just fast enough for 14MHz. For 20MHz you would probably need something close to 20ns.

Make sure the timing values on the chips are what you think they are. I remember from the days of the IBM PC/XT that DRAM DIP chips were often marked e.g. “-15” for 150ns (not 15ns). I think it must be very difficult to make really fast SRAM memory; I’m not sure but I think the chip I mentioned above is the fastest version of the 128KB chip that Alliance makes in DIP format. For faster versions you need an SMD version.

On the other hand, the fastest 6502 that I know of is the WDC 65C02S14 which may run at 20MHz but is spec’d at 14MHz.

Just sayin’ 🙂

===Jac

Yah, the 12ns spec comes from the datasheet, not the chip markings.

These chips seem to be intended for backside or inline cache on mid-range 486 chips from the 1990s (the last use I know of for DIP chips this crazy fast). I think they maxed out at 64k, and were quickly replaced with on-die caches, and SMD components, so the 64k ones are harder to find.

The next RAM-related project I do will almost certainly use SMD chips. There’s little reason not to use them, and the options are a whole lot better (and cheaper).

Readers of the blog have told me you can get the 64k ones by buying old 486 motherboards, but that was more messing around than I wanted to bother with.

Sorry I’m late to this episode…

Those 64Kx8 SRAMs I tried to send you (#$%&ing USPS) were, in fact, salvaged cache RAM from old 486 mobos. If you want to run a chip search, they were UMC branded UM61512AK-20 (yes, 20nS!). Unfortunately, the only 64K chip I have left is a 64Kx1 “Tag” RAM. The disappointing thing is that in addition to the 4 UMCs I sent, I threw in an extra 8 of the 32Kx8 (4 were 15nS, 4 were 20nS). With datasheets.

I still have a few of the 32Kx8 (15/20nS) left. If you say “please” (and the USPS can get them to you), I can send you some.

Note to fellow followers: These chips need to be 5V tolerant. Most of the chips I have on hand are either 5V or 3.3/5V capable. So double-check the specs before firing them off to Quinn, otherwise she’ll have to throw in some 3V3 regulators and buffers.

nop

Yes I also have some 300 mil wide ultra fast rams that were pulled from old motherboards “cache” memory.

BTW, I bought one of HP’s “personal” laser printers a few years back. I don’t remember the model # off the top of my head, but it’s one of those little cube styled ones. I got the duplexor option. It works well with the toner transfer process on the pc blue stuff from all electronics. In the long run it saves money over ink jet in the cost of the toner vs ink. Much less $ per page. Of course if you NEED color …..

Nice job debugging!

I remember stacking ram chips (years ago) on my Kaypro, to implement a 256k battery backed ram drive. Boy was that fun!

Really enjoy your writing–keep it up!

There are even 512KB SRAMs in DIP packages, 55ns and less.

If anyone cares, I could look up the part IDs.

Sure, I would be interested in that. I didn’t have a lot of luck finding anything faster than 55ns in >32k DIP packages.

What? You mean all photocopiers don’t make exact copies? That would explain why my homemade dollar bills are rejected by vending machines and strippers! Will have to experiment with two dollar bills.